This voicemail mistake could be inviting scammers—here’s how to fix it!

By Veronica E.

In today's world, our voices are more than just a way to communicate—they’re now a key to unlocking our digital lives.

But with this new convenience comes new risks.

Have you ever thought about how easy it is for someone to use your voice to impersonate you or someone you love?

One overlooked area that could be putting your security at risk is your voicemail greeting.

This simple mistake could leave you vulnerable to scammers looking to trick you or your family out of money.

At The GrayVine, we’re here to help you stay informed and keep your identity safe, so let’s take a closer look at why changing your voicemail greeting is an essential step in protecting yourself.

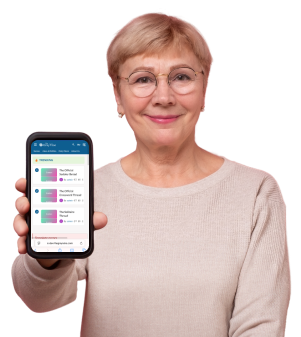

Protecting your voice: A simple change to your voicemail can help safeguard your identity from emerging scams. Image Source: Unsplash / A. C.

The Rise of AI Voice Cloning Scams

Imagine answering the phone and hearing a loved one’s voice on the other end, asking for urgent help or money.

Naturally, you’d want to help, right? But here’s the catch: it might not even be them.

Scammers are now using sophisticated technology to clone voices with stunning accuracy.

With the rise of artificial intelligence, voice cloning has become a dangerous tool for scammers, making it easier for them to trick you.

Why Your Voicemail Greeting Matters

It might seem harmless, but your voicemail greeting could be the very thing scammers need to start their attack.

Even a short clip of your voice can be enough for AI to create a convincing clone.

With a recording of your voice, scammers can try to bypass voice authentication or use a cloned version of it to trick your family and friends into handing over personal details or money.

It’s unsettling to think that a simple voicemail greeting could be putting you at risk.

Experts Weigh In on the Threat

Cybersecurity experts are speaking out about this growing threat.

Lucas Hansen of CivAI explains that voice authentication has always had flaws and should never be relied on as the only security measure.

AI voice cloning, he warns, is becoming a major issue.

Truman Kain of Huntress adds that while direct breaches through voice verification are rare, the use of voice cloning in scams such as the "grandparent scam," in which a caller poses as a grandchild in trouble and urgently asks for money, is on the rise.

Nati Tal of Guardio Labs advises caution, especially when a familiar voice makes a request that seems out of the ordinary.

How to Protect Yourself

To keep yourself safe from these high-tech scams, experts recommend taking these steps:

- Change Your Voicemail: Switch to a generic, automated greeting to reduce the chances of scammers using your voice to impersonate you.

- Limit Public Sharing: Be mindful of what you post on social media. Even small audio or video clips can be used against you. Tighten your privacy settings to control who sees your posts.

- Verify Suspicious Calls: If you receive an unexpected request, even from a familiar voice, hang up and contact the person directly, using a number you already know is theirs, before taking any action. Scammers can spoof phone numbers, so don’t rely on caller ID.

- Advocate for Stronger Security: Contact your bank and other institutions to make sure they require multi-factor authentication. Relying on voice authentication alone isn’t enough to keep your accounts secure.

As technology continues to evolve, so do the methods scammers use to exploit it.

By staying vigilant and taking a few simple steps, you can protect yourself and your loved ones from these emerging threats.

Key Takeaways

- Artificial intelligence voice cloning scams are on the rise, and cybersecurity experts advise changing your voicemail greeting to help reduce the risk of fraud.

- Scammers can clone voices from just a few minutes of audio and then use the clone to try to gain unauthorized access to accounts or to convince people to part with their money.

- Experts recommend using automated messages instead of personalized voicemails and implementing stronger multi-factor authentication measures.

- It’s important to limit the public sharing of personal audio and verify any suspicious calls, even if they seem to come from a familiar voice or phone number.

Have you or anyone you know been affected by a voice cloning scam? What steps have you taken to protect yourself? We’d love to hear your stories and tips—drop a comment below. Let’s work together to stay one step ahead of these tech-savvy scammers and keep our digital identities safe!